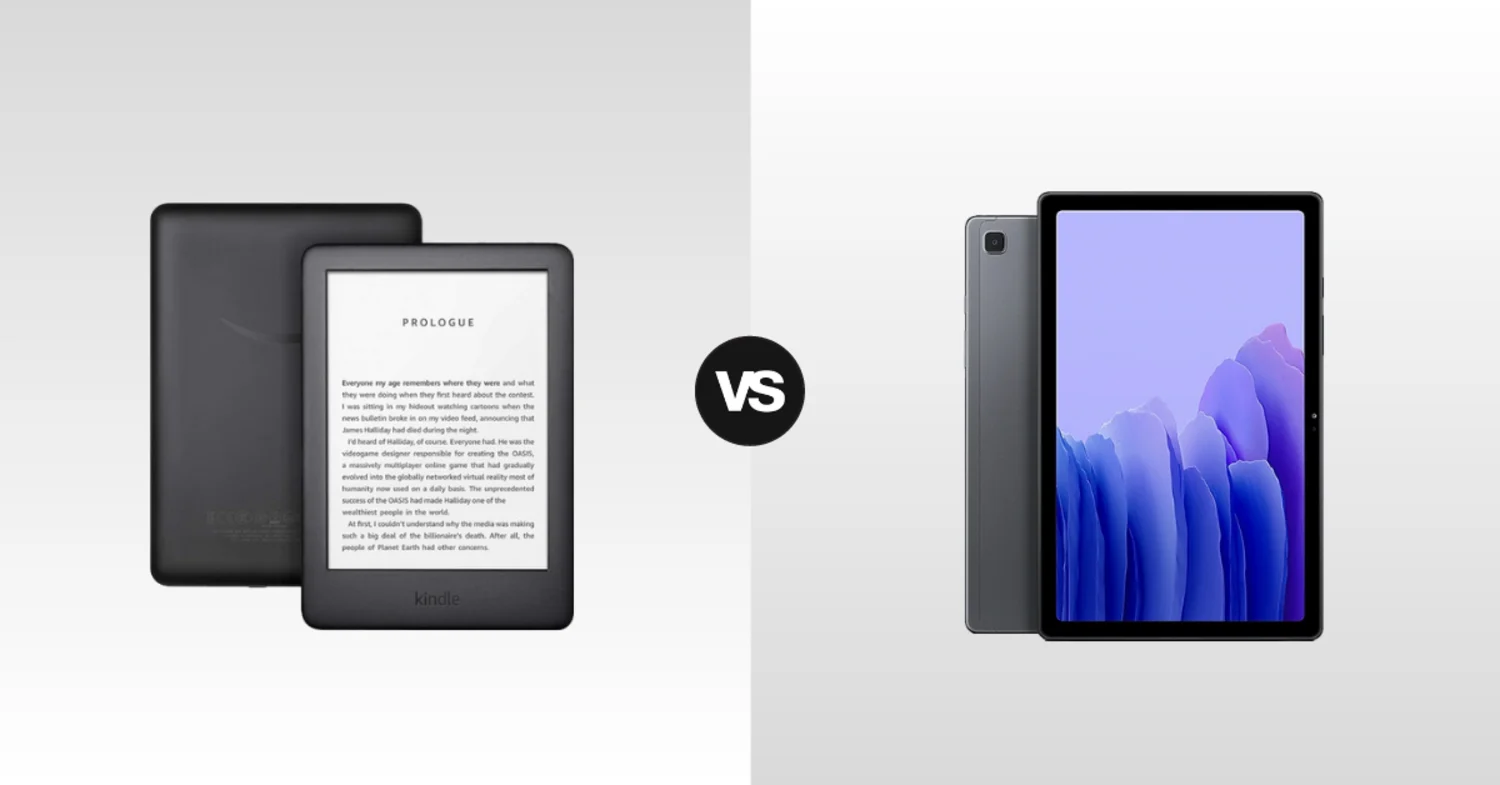

In an age of digital everything, the way we read has become a battleground of screens. On one side, we have the specialized e-reader, a device dedicated to one thing: simulating the experience of reading ink on paper. On the other, we have the versatile tablet, a multi-purpose screen capable of displaying books alongside apps, videos, and games. For the avid reader, the choice seems simple, but it’s fraught with trade-offs. Do you prioritize the health of your eyes and battery life, or do you value the flexibility and color of a full-fledged computing device? Let’s dive deep into the page-turner of a debate.

E-Readers vs. Tablets: Which Device Is Better for the Avid Reader?

The Case for the E-Reader: The Paper-Like Experience

The e-reader’s greatest strength lies in its screen technology: E Ink (electronic ink). Unlike the backlit LCD or self-emissive OLED displays found on tablets, E Ink screens are reflective. They use tiny microcapsules filled with charged black and white particles that rise to or sink away from the surface to form text and images. Because the screen itself emits no light, many readers find it less tiring during long reading sessions. That also makes an e-reader well suited to reading in bed before sleep: with the front light off, or set to a warm tone on models that offer one, you avoid much of the blue light that can disrupt your circadian rhythm. Reading outdoors, where a tablet’s screen becomes a washed-out mirror, is where E Ink truly shines; direct sunlight makes the text easier to read, not harder.

Beyond the screen, e-readers offer a distraction-free sanctuary. There are no notifications buzzing, no tempting app icons, and no YouTube rabbit holes to fall into. When you pick up a Kindle or a Kobo, you are there to read, and that focus is a precious commodity in the modern world. Battery life is another superpower. Because E Ink screens draw power mainly when the page changes (not to maintain a static image), an e-reader can last for weeks, sometimes months with light use, on a single charge, though heavy use of the front light or Wi-Fi shortens that. You can often take it on a two-week vacation without even packing the charger. E-readers are also typically lighter and smaller than most tablets, making them more comfortable to hold for hours, especially with one hand. For the purist who simply wants to read books, the e-reader is a beautifully focused tool.

The Case for the Tablet: The Multimedia Library

The tablet, however, offers a completely different proposition. It is not a single-purpose tool; it is a multimedia library. With a tablet, you aren’t limited to just text. You can read full-color comic books and graphic novels in all their vibrant glory, with panels that pop off the screen. Magazine layouts, cookbooks with photos, and illustrated children’s books come to life in a way they simply cannot on a monochrome E Ink display. If you’re reading a non-fiction book and encounter a term you don’t know, you can instantly switch to a web browser or a dictionary app for deeper research.

Furthermore, a tablet allows you to integrate other media into your “reading” experience. You can switch between reading an ebook and watching a documentary about the same subject. You can listen to an audiobook from the same device while doing chores. For students or researchers, the ability to annotate PDFs directly on the screen with a stylus, organize files in folders, and access cloud storage is invaluable. While modern e-readers have added some rudimentary web browsing and note-taking, those features tend to feel slow and clunky compared to the fluid, responsive experience of an iPad or a Samsung Galaxy Tab. The tablet offers a richer, albeit more distracting, ecosystem for the modern reader.

The Middle Ground: Do You Have to Choose?

Interestingly, the lines are blurring. The latest generation of devices is attempting to offer the best of both worlds. E Ink tablets like the reMarkable 2 and the Kindle Scribe pair a large E Ink screen with a stylus for note-taking and PDF annotation, sitting somewhere between an e-reader and a digital notebook. Meanwhile, tablets are getting better at reading. “True Tone” on the iPad adjusts the display’s color temperature to match ambient light, and specialized reading apps can dim the screen or invert colors (dark mode) to help reduce eye strain. However, no amount of software trickery can turn an emissive display into a reflective one. If you read for hours on a tablet, your eyes will likely still tire faster than they would on an E Ink screen.

The Verdict: The Avid Reader’s Choice

So, which one wins? It depends entirely on what you read and how you read.

If you are a novel lover, a commuter, or a bedtime reader who consumes primarily text-based books for hours on end, the choice is clear: get an e-reader. The eye comfort, the lack of distractions, and the incredible battery life make it the superior tool for the job. It’s a dedicated device for a dedicated purpose.

If you are a student, a researcher, a comic book fan, or someone who reads a mix of books, PDFs, and web content, you will likely be frustrated by the limitations of an e-reader. For you, a tablet is the better choice. It offers the versatility to handle all forms of written and visual media, even if it means sacrificing some eye comfort and gaining a few notifications. In the end, the best device for the avid reader is the one that makes you want to read more. For some, that’s the quiet focus of E Ink. For others, it’s the colorful versatility of a tablet.